Case Study: Oracle Private Cloud Appliance — Console for Mission-Critical Infrastructure

Senior frontend engineer on the management console for Oracle's Private Cloud Appliance, the same infrastructure that powers NASA's Deep Space Network, which communicates with the James Webb Space Telescope, the Voyager probes in interstellar space, and the rovers on Mars. I led a console-wide migration to a config-driven TypeScript architecture, built shared components consumed across 12+ micro-frontends, and delivered Disaster Recovery capabilities for operators managing high-availability infrastructure.

The Product

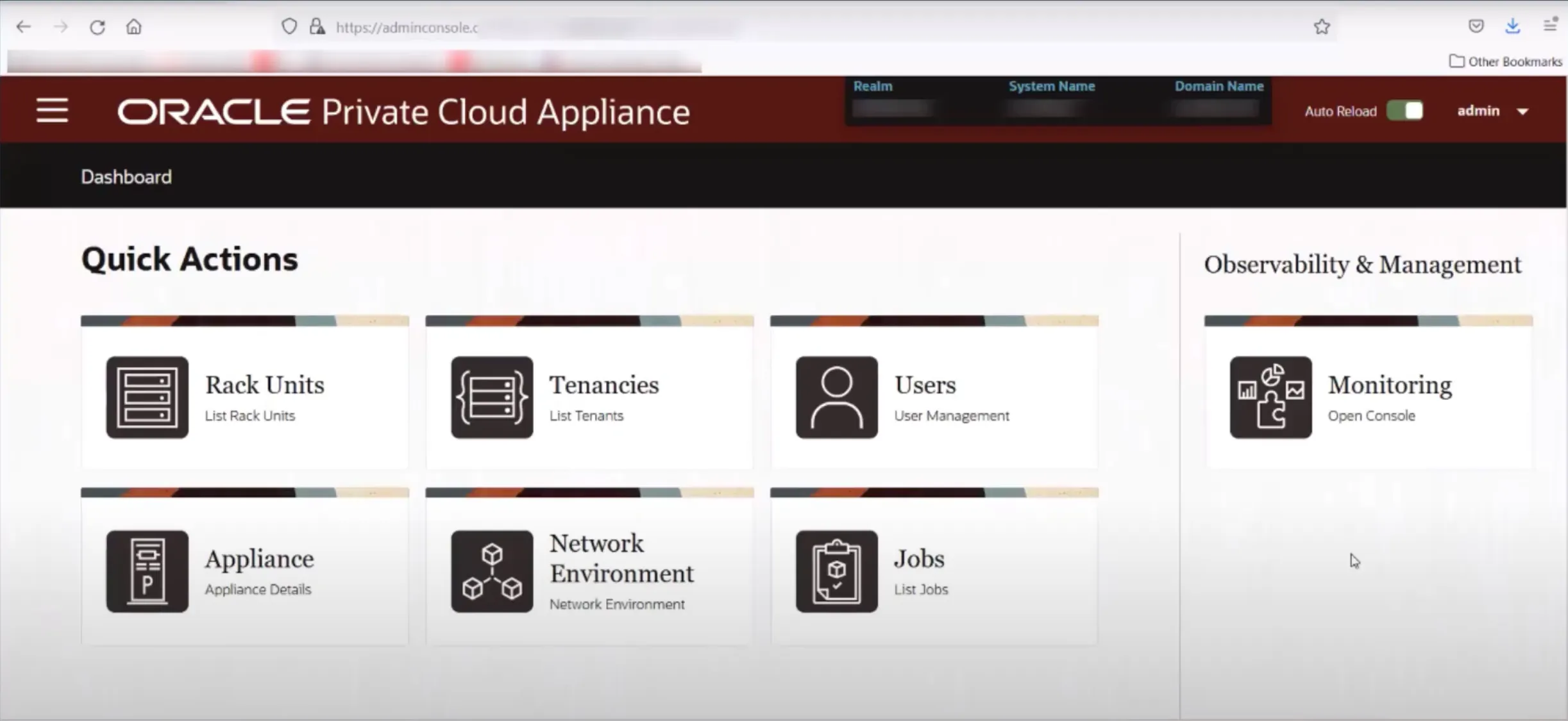

Oracle Private Cloud Appliance (PCA) is a rack-scale, fully integrated cloud infrastructure system that runs OCI compute, storage, and networking services inside a customer's own data center. It is designed for organizations that need cloud capabilities but can't, or won't, run their workloads in a public cloud: government agencies, defense contractors, financial institutions, and research organizations with strict data sovereignty and security requirements.

Each appliance supports up to 24 compute nodes with 768 GB of RAM per node, running hundreds of virtual machines managed through a unified console. The same APIs, CLI, and SDK available in Oracle Public Cloud work identically on PCA, giving operators a consistent experience whether they manage workloads in a public OCI region or in an air-gapped facility.

Where PCA Runs

NASA's Jet Propulsion Laboratory operates seven PCAs across five locations (Madrid, Canberra, Goldstone, JPL headquarters, and a test facility in Monrovia, California), replacing approximately 300 Sun Microsystems servers with 300+ virtual machines. These appliances power the Deep Space Network, which connects Earth with 40 spacecraft beyond Earth orbit: the $10 billion James Webb Space Telescope, the two Voyager probes in interstellar space, Mars rovers and orbiters, and upcoming Artemis lunar missions. PCA also runs NASA's hydrology applications that map water availability across the western United States, and the Solar System Treks platform, which produces GIS surface maps of the Moon, Mars, Mercury, and the moons of Saturn and Jupiter.

Operators at JPL, Goldstone, Madrid, and Canberra use the console I worked on to provision compute, configure networks, manage rack units, and maintain the virtual machines that keep these missions running. An outage in communications with spacecraft, or with astronauts on future Artemis missions, is unacceptable, so the management console has to be reliable, accessible, and operationally correct. (Oracle Connect)

My Role

As a Senior Software Engineer (IC3) on the OCI Private Cloud Appliance team, I owned frontend architecture decisions for the PCA management console and delivered features used by operators handling high-impact infrastructure workflows.

Config-Driven Console Migration

The PCA console on OCI had grown to 15+ screens across compute, storage, networking, and administration domains. Each screen had been implemented independently, resulting in inconsistent patterns, duplicated logic, and high cost to extend. I led the migration to a configuration-first TypeScript framework where screen layout, field validation, and API bindings are declared as typed configuration objects rather than hand-coded per screen. This eliminated a category of one-off UI variations and made it possible to ship new management screens by composing existing building blocks instead of writing from scratch.
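To make the idea concrete, here is a minimal sketch of what a typed screen configuration could look like. The schema (`ScreenConfig`, `FieldConfig`, `validate`, `toRequest`) is a hypothetical stand-in, since the internal framework's API is not public. Layout, validation rules, and the API binding are all plain data, and one generic validator serves every screen.

```typescript
// Hypothetical schema for a config-driven screen; not the actual internal framework.
type FieldKind = "text" | "number" | "select";

interface FieldConfig {
  id: string;          // key in form state and in the request body
  label: string;       // visible label, reused as the accessible name
  kind: FieldKind;
  required?: boolean;
  min?: number;        // only meaningful for "number" fields
  max?: number;        // only meaningful for "number" fields
  options?: string[];  // only meaningful for "select" fields
}

interface ScreenConfig {
  title: string;
  sections: { heading: string; fields: FieldConfig[] }[];
  submit: { method: "POST" | "PUT"; path: string }; // API binding
}

type FormValues = Record<string, string | number | undefined>;

// One generic validator for every screen: the rules come from the config.
function validate(screen: ScreenConfig, values: FormValues): Record<string, string> {
  const errors: Record<string, string> = {};
  for (const section of screen.sections) {
    for (const field of section.fields) {
      const value = values[field.id];
      if (value === undefined || value === "") {
        if (field.required) errors[field.id] = `${field.label} is required`;
        continue;
      }
      if (field.kind === "number") {
        const n = Number(value);
        if (Number.isNaN(n)) {
          errors[field.id] = `${field.label} must be a number`;
        } else if (field.min !== undefined && n < field.min) {
          errors[field.id] = `${field.label} must be at least ${field.min}`;
        } else if (field.max !== undefined && n > field.max) {
          errors[field.id] = `${field.label} must be at most ${field.max}`;
        }
      }
      if (field.kind === "select" && field.options && field.options.indexOf(String(value)) === -1) {
        errors[field.id] = `${field.label} must be one of: ${field.options.join(", ")}`;
      }
    }
  }
  return errors;
}

// The same config also drives the API call (auth and error handling omitted).
function toRequest(screen: ScreenConfig, values: FormValues) {
  return { method: screen.submit.method, path: screen.submit.path, body: values };
}

// Example screen declared entirely as data; names, shapes, limits, and path are illustrative.
const createInstanceScreen: ScreenConfig = {
  title: "Create Instance",
  sections: [
    {
      heading: "Basics",
      fields: [
        { id: "displayName", label: "Name", kind: "text", required: true },
        {
          id: "shape",
          label: "Shape",
          kind: "select",
          required: true,
          options: ["VM.Example.Small", "VM.Example.Large"],
        },
        { id: "ocpus", label: "OCPUs", kind: "number", required: true, min: 1, max: 32 },
      ],
    },
  ],
  submit: { method: "POST", path: "/instances" },
};
```

A renderer would walk `sections` the same way the validator does, which is what lets a new screen ship as configuration alone rather than as new per-screen code.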

Feature-Gating Across 20+ Endpoints

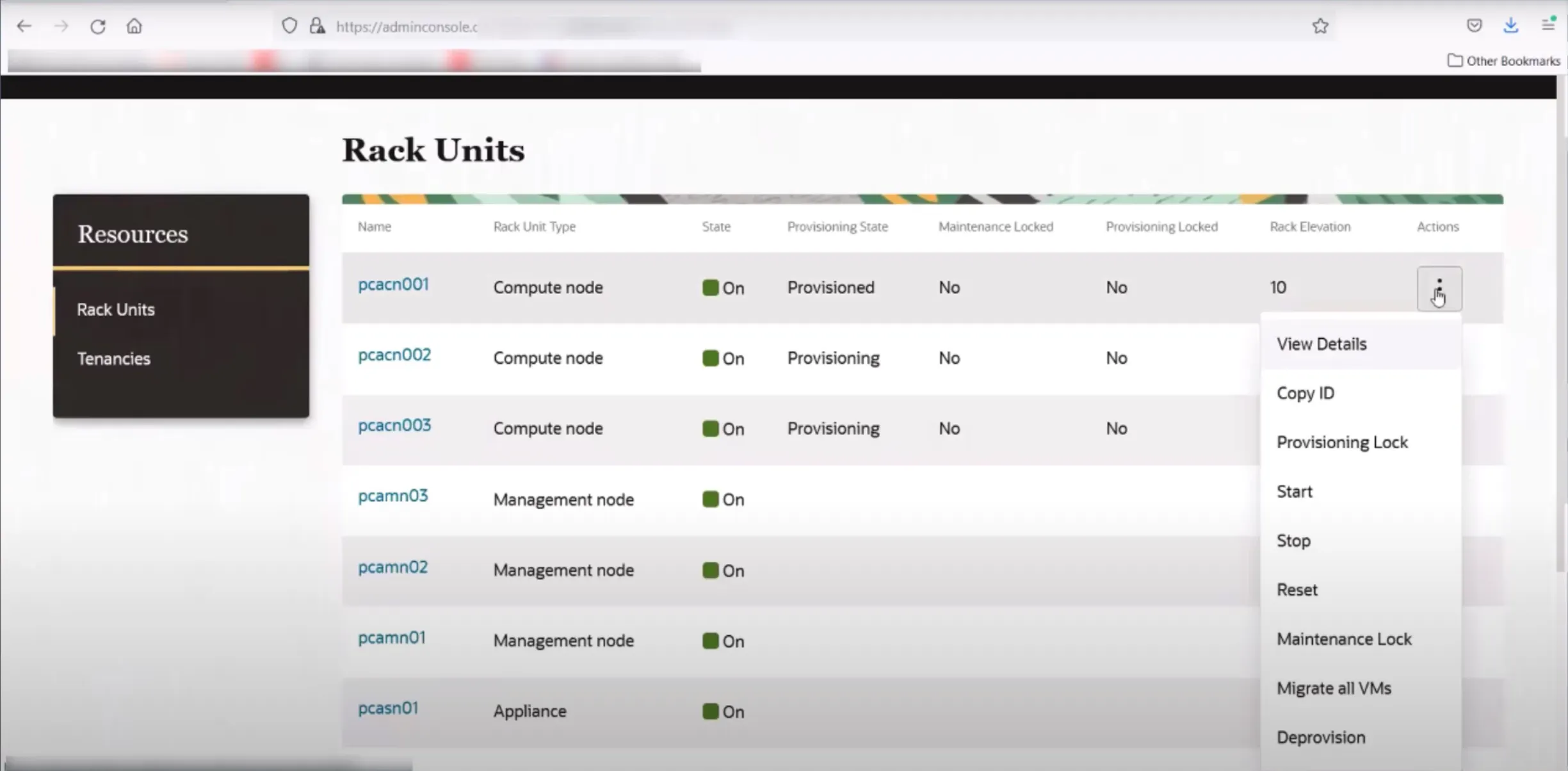

PCA ships as an appliance with versioned firmware, and not every backend capability is available on every firmware version. I standardized feature-gating behavior across 20+ backend endpoints so the console dynamically adapts its UI to the appliance's actual capabilities. Features the running firmware doesn't support are hidden or disabled instead of surfacing confusing error states. This prevents operators from attempting actions their appliance can't perform, which matters when a misconfigured operation could affect the availability of hundreds of virtual machines.
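A hedged sketch of how gating can be centralized: one table maps each feature to the minimum firmware version that exposes its endpoint, plus whether the control is hidden or disabled when unsupported. The feature IDs, version numbers, and the version-threshold model itself are illustrative assumptions; real gating might key on reported capabilities rather than version numbers.

```typescript
// Minimal sketch of declarative feature gating. Feature IDs and firmware
// versions are hypothetical placeholders, not real PCA release numbers.
type UnsupportedMode = "hide" | "disable";

interface FeatureGate {
  minFirmware: string;        // lowest firmware version exposing the backing endpoint
  whenUnsupported: UnsupportedMode;
  reason?: string;            // shown next to a disabled control
}

type GateResult =
  | { state: "enabled" }
  | { state: "hidden" }
  | { state: "disabled"; reason: string };

// Compares dotted numeric versions ("3.0.10" vs "3.0.2"); missing parts count as 0.
function compareVersions(a: string, b: string): number {
  const pa = a.split(".").map(Number);
  const pb = b.split(".").map(Number);
  const len = Math.max(pa.length, pb.length);
  for (let i = 0; i < len; i++) {
    const x = i < pa.length ? pa[i] : 0;
    const y = i < pb.length ? pb[i] : 0;
    if (x !== y) return x < y ? -1 : 1;
  }
  return 0;
}

// One table for all gated features, instead of ad hoc checks in each screen.
const FEATURE_GATES: Record<string, FeatureGate> = {
  drReplication: { minFirmware: "3.0.2", whenUnsupported: "hide" },
  volumeResize: {
    minFirmware: "3.1.0",
    whenUnsupported: "disable",
    reason: "Requires a newer appliance firmware version",
  },
};

function evaluateGate(featureId: string, firmware: string): GateResult {
  const gate = FEATURE_GATES[featureId];
  if (gate === undefined) return { state: "hidden" }; // unknown features fail closed
  if (compareVersions(firmware, gate.minFirmware) >= 0) return { state: "enabled" };
  if (gate.whenUnsupported === "hide") return { state: "hidden" };
  return { state: "disabled", reason: gate.reason ?? "Not supported on this firmware version" };
}
```

Unknown features fail closed (hidden), which matches the goal of never offering an action the appliance cannot perform.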

Shared Components Across 12+ Micro-Frontends

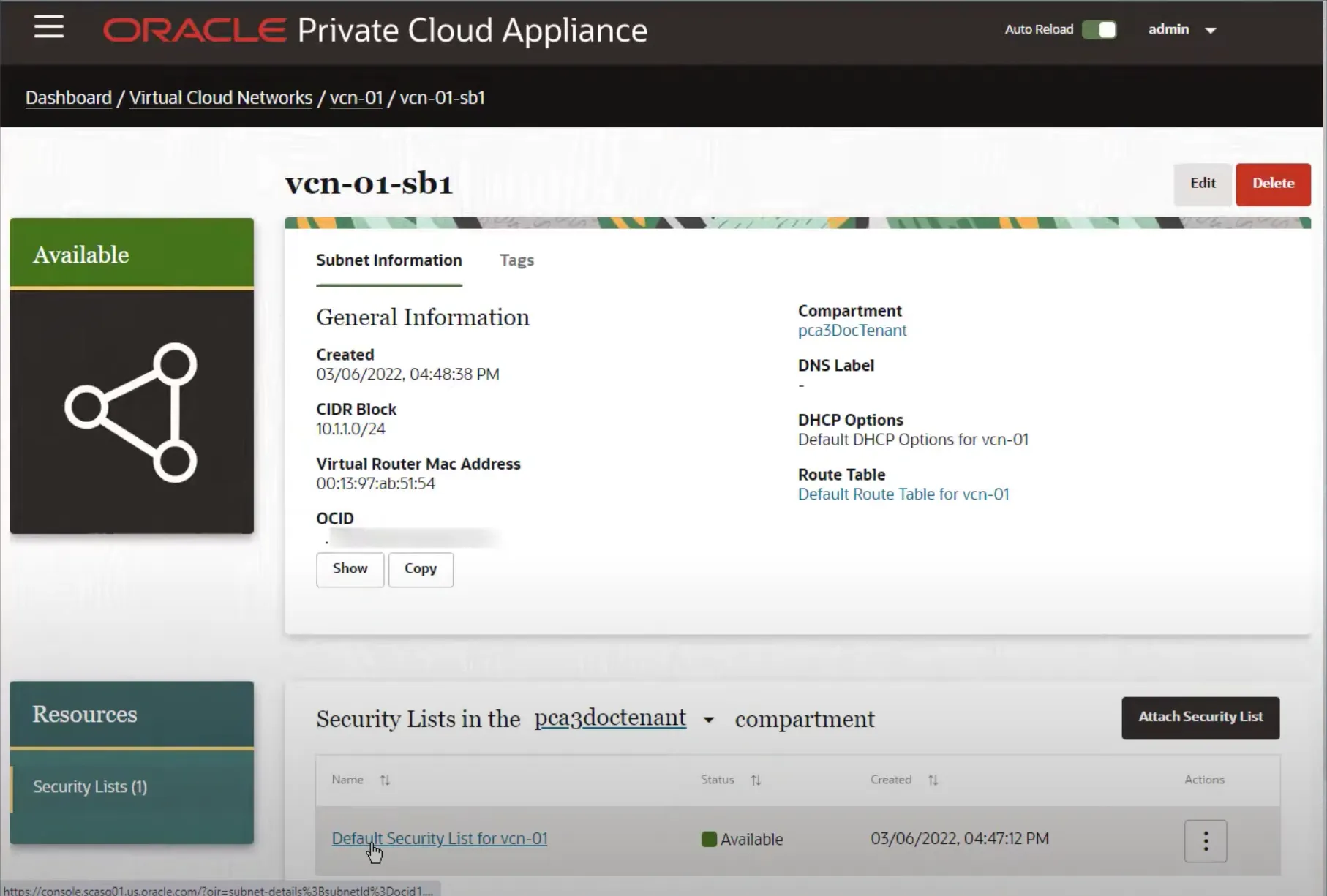

The OCI console is composed of micro-frontends, each owned by a different service team. I built shared React/TypeScript and Oracle JET components — data tables, detail views, action menus, configuration wizards — that are consumed across 12+ micro-frontends. The component contracts enforce consistent interaction patterns, accessibility defaults, and visual behavior so that operators experience a unified console regardless of which service team built the underlying screen.
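One way a component contract can enforce accessibility and interaction defaults is through the type system plus a shared resolver. The sketch below uses a hypothetical action-menu contract (`ActionItem`, `resolveActions`), not an Oracle JET API: icon-only items cannot compile without an `ariaLabel`, destructive actions always sort last and require confirmation, and every consuming team gets the same behavior.

```typescript
// Hypothetical shared action-menu contract; not an Oracle JET API.
interface ActionBase {
  id: string;
  destructive?: boolean; // e.g. terminate, delete
  disabled?: boolean;
}

// Icon-only items must supply an accessible name, or the code does not compile.
type ActionItem =
  | (ActionBase & { kind: "text"; label: string })
  | (ActionBase & { kind: "icon"; icon: string; ariaLabel: string });

interface RenderedAction {
  id: string;
  role: "menuitem";
  accessibleName: string;        // what assistive technology announces
  visibleText?: string;          // absent for icon-only items
  icon?: string;
  ariaDisabled: boolean;         // rendered as aria-disabled so the item stays discoverable
  requiresConfirmation: boolean; // destructive actions always confirm
}

// Every micro-frontend calls this, so ordering and a11y defaults stay identical.
function resolveActions(items: ActionItem[]): RenderedAction[] {
  const safe = items.filter((item) => !item.destructive);
  const destructive = items.filter((item) => item.destructive === true);
  return safe.concat(destructive).map(
    (item): RenderedAction => ({
      id: item.id,
      role: "menuitem",
      accessibleName: item.kind === "text" ? item.label : item.ariaLabel,
      visibleText: item.kind === "text" ? item.label : undefined,
      icon: item.kind === "icon" ? item.icon : undefined,
      ariaDisabled: item.disabled === true,
      requiresConfirmation: item.destructive === true,
    }),
  );
}
```

A React or JET wrapper would then render `RenderedAction` objects, so the decisions about ordering, naming, and confirmation live in one place rather than in each team's screen.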

Disaster Recovery and Replication

I delivered the user-facing capabilities for Disaster Recovery and Replication: the workflows operators use to configure, monitor, and fail over replicated infrastructure between PCA appliances. For a product whose customers include NASA and financial institutions, DR isn't a checkbox feature. It's core infrastructure that operators need to trust with the availability of their most critical workloads.
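DR screens benefit from modeling the replication lifecycle as an explicit state machine, so the console only offers operator actions that are valid in the current state. The states, events, and transitions below are a simplified, hypothetical model for illustration, not PCA's actual replication states.

```typescript
// Simplified, hypothetical DR lifecycle; real PCA replication states may differ.
type DrState = "notConfigured" | "replicating" | "degraded" | "failingOver" | "failedOver";
type DrEvent =
  | "configure"          // operator sets up a replication pair
  | "lagDetected"        // monitoring reports replication falling behind
  | "lagRecovered"       // monitoring reports replication caught up
  | "initiateFailover"   // operator action
  | "failoverComplete";  // reported when the standby takes over

const TRANSITIONS: Record<DrState, Partial<Record<DrEvent, DrState>>> = {
  notConfigured: { configure: "replicating" },
  replicating: { lagDetected: "degraded", initiateFailover: "failingOver" },
  degraded: { lagRecovered: "replicating", initiateFailover: "failingOver" },
  failingOver: { failoverComplete: "failedOver" },
  failedOver: {},
};

function transition(state: DrState, event: DrEvent): DrState {
  const next = TRANSITIONS[state][event];
  if (next === undefined) {
    throw new Error(`Event "${event}" is not valid in state "${state}"`);
  }
  return next;
}

// Only these events are triggered by the operator; the rest come from monitoring.
const OPERATOR_ACTIONS: DrEvent[] = ["configure", "initiateFailover"];

// The console renders exactly the operator actions the current state allows.
function availableActions(state: DrState): DrEvent[] {
  return OPERATOR_ACTIONS.filter((event) => TRANSITIONS[state][event] !== undefined);
}
```

Whether failover should be offered from a degraded state is a product decision; this sketch allows it, and a real UI would likely pair it with a stronger confirmation step.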

Engineering Challenges

Depth Without Friction

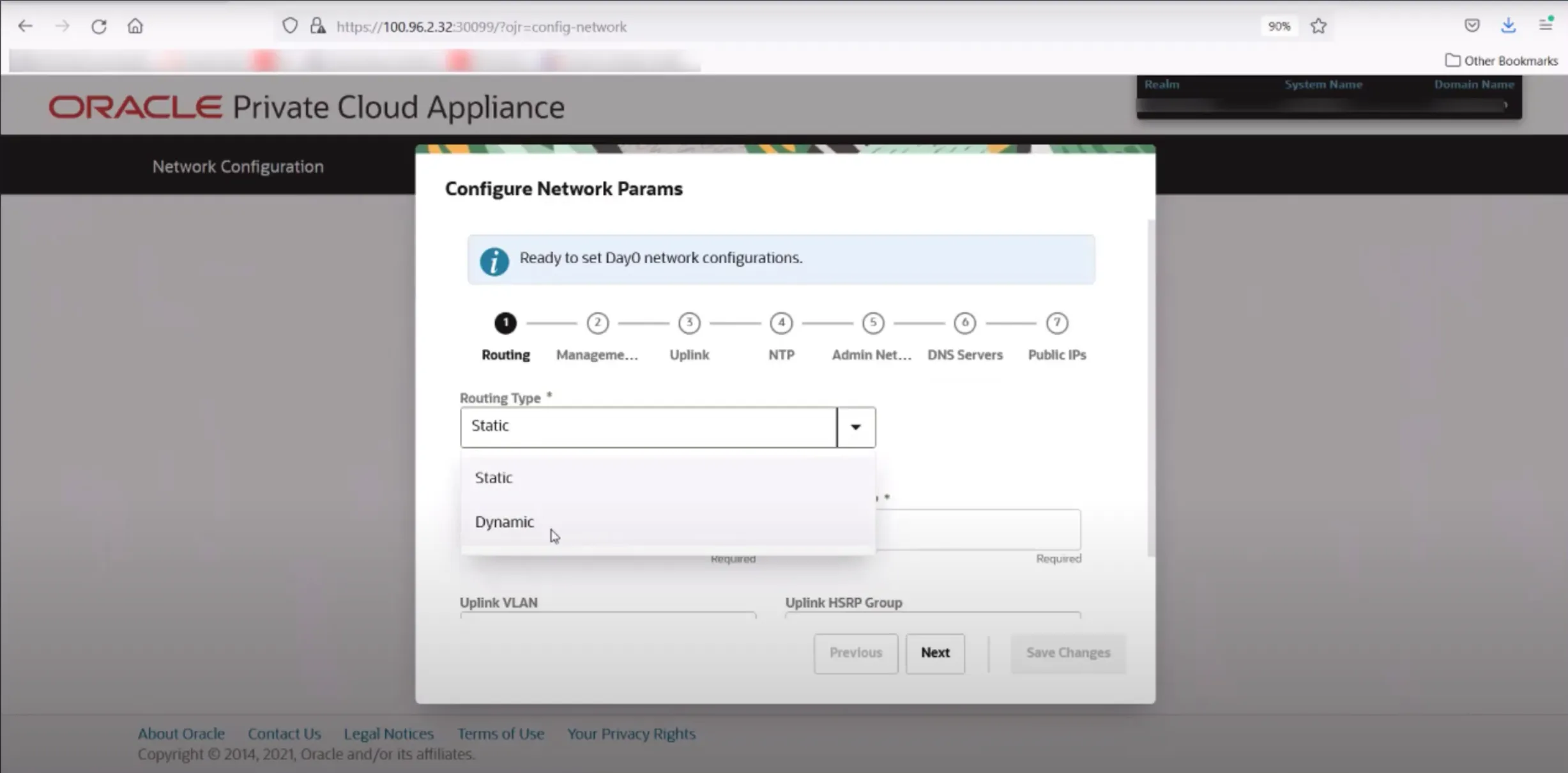

Enterprise cloud management requires dense information presentation — rack unit status, VM lifecycle state, network topology, storage allocation, security lists — while keeping advanced operations discoverable. The challenge was simplifying interactions without hiding capability. An operator provisioning a compute node or configuring Day-0 networking on a new appliance needs every option available, but the interface can't overwhelm someone performing routine maintenance. The config-driven architecture helped here: the same framework renders both the full-complexity provisioning wizard and the simplified monitoring dashboard, adapting density to the task.
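A small sketch of density adaptation, assuming each field in the configuration carries a hypothetical `tier` and optional `defaultValue`. The same field list produces a full layout for provisioning and a compact one for routine work. In compact mode, advanced fields move into a collapsible group rather than disappearing, and any advanced field an operator has changed from its default stays inline.

```typescript
// Hypothetical field metadata; tiers and defaults would live in the screen config.
type Density = "full" | "compact";

interface FieldSpec {
  id: string;
  label: string;
  tier: "essential" | "advanced";
  defaultValue?: string;
}

interface Layout {
  primary: string[];  // rendered inline
  advanced: string[]; // rendered inside a collapsible "Advanced" group
}

function layoutFor(fields: FieldSpec[], density: Density, values: Record<string, string>): Layout {
  // An advanced field the operator has changed from its default is never tucked away.
  const isCustomized = (f: FieldSpec): boolean =>
    f.defaultValue !== undefined && values[f.id] !== undefined && values[f.id] !== f.defaultValue;
  const inline = (f: FieldSpec): boolean =>
    density === "full" || f.tier === "essential" || isCustomized(f);
  return {
    primary: fields.filter(inline).map((f) => f.id),
    // Nothing is dropped: fields not shown inline remain reachable in the group.
    advanced: fields.filter((f) => !inline(f)).map((f) => f.id),
  };
}

// Illustrative Day-0 networking fields; IDs and defaults are assumptions.
const networkFields: FieldSpec[] = [
  { id: "dnsServers", label: "DNS servers", tier: "essential" },
  { id: "ntpServers", label: "NTP servers", tier: "essential" },
  { id: "uplinkVlan", label: "Uplink VLAN", tier: "advanced" },
  { id: "mtu", label: "MTU", tier: "advanced", defaultValue: "1500" },
];
```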

Consistency Across a Micro-Frontend Ecosystem

When 12+ teams ship independently into a shared console, visual and behavioral drift is inevitable without shared infrastructure. The components I built were not optional style guidance; they were the building blocks teams used to assemble their screens. They earned adoption through developer experience: using the shared components was faster than rolling custom implementations, and the accessibility and UX defaults came for free.

Firmware-Aware UI

Unlike a SaaS product, where the backend always runs the latest version, each PCA appliance runs a specific firmware release and is upgraded on the customer's schedule, not Oracle's. The console therefore has to work correctly against every supported firmware version and gracefully degrade features that aren't available. This required a capability-discovery layer that probes the appliance at load time and conditionally renders the UI, a fundamentally different problem from typical feature flagging.
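A minimal sketch of a capability-discovery layer, assuming the appliance can report its capabilities through some endpoint. The `ApplianceInfo` shape and the injected `fetchInfo` function are hypothetical stand-ins, not a documented PCA API. The probe runs once per load, concurrent callers share the in-flight request, and a failed probe fails closed so gated UI stays hidden.

```typescript
// Hypothetical shape of what the appliance reports; not a documented PCA endpoint.
interface ApplianceInfo {
  firmwareVersion: string;
  capabilities: string[];
}

type InfoFetcher = () => Promise<ApplianceInfo>;

class CapabilityRegistry {
  private readonly fetchInfo: InfoFetcher;
  private pending: Promise<Set<string>> | null = null;

  constructor(fetchInfo: InfoFetcher) {
    this.fetchInfo = fetchInfo;
  }

  // Probe once per console load; concurrent callers share the in-flight request.
  private load(): Promise<Set<string>> {
    if (this.pending === null) {
      this.pending = this.fetchInfo()
        .then((info) => new Set(info.capabilities))
        // Fail closed: if the probe fails, every gated capability reads as unavailable.
        .catch(() => new Set<string>());
    }
    return this.pending;
  }

  async has(capability: string): Promise<boolean> {
    const capabilities = await this.load();
    return capabilities.has(capability);
  }
}
```

Caching a failed probe keeps the UI conservative until the next load; a production version might add a bounded retry instead.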

Outcomes

- ~20% reduction in integration effort through the config-driven migration: new console screens are built by composing typed configuration rather than writing custom implementations.

- Consistent operator experience across 12+ micro-frontends through shared component contracts with built-in accessibility and UX defaults.

- Reliable feature-gating across 20+ endpoints, preventing operators from encountering broken or unsupported functionality on their firmware version.

- DR capability delivery: replication and failover workflows trusted by organizations where infrastructure downtime has mission-critical consequences.

Console screenshots are from Oracle's public PCA documentation. NASA context sourced from Oracle Connect.

Project information

- Category: Enterprise Cloud Infrastructure

- Role: Senior Software Engineer (IC3), OCI Private Cloud Appliance

- Employer: Oracle Corporation

- Timeframe: 2024 - 2025

- Technologies: TypeScript, React, Oracle JET, Micro-Frontends, Config-Driven Architecture, WCAG/Section 508, Feature Gating, CI/CD

- Oracle Private Cloud Appliance

- Console UI is internal to Oracle.