Case Study: AI-Powered Compliance Automation for Quorum

Result: Reduced manual compliance data entry by over 70% by automating document-to-field extraction with full audit traceability. Every AI output is linked to specific coordinates in the source document. Workflow state persists across page refreshes, navigation, and session interruptions, so no user progress is lost.

I was contracted to design and build the AI workflow layer for Quorum, a food safety SaaS platform that automates supplier onboarding and compliance verification for QA teams.

Food safety suppliers operate under FDA FSMA regulations and GFSI-benchmarked audit schemes such as SQF, where a single non-conforming record can trigger a facility hold. Quorum needed to turn unstructured supplier PDFs into verified database records, and every AI-generated field had to be traceable back to a specific location in the source document. There was no room for a black-box AI that QA teams couldn't audit.

The existing application already had a document ingestion pipeline and a form field matching system. My scope was the AI layer on top of them: the workflow controller, the chat interface, the context system that connects the two AI surfaces, and the automated compliance pipeline that coordinates them.

Engineering Challenges

Workflow State and the Gatekeeper

Supplier onboarding involves multiple distinct phases, and each one requires different context, different agent capabilities, and different validation rules. A naive implementation would re-prompt the LLM with full conversation history at every turn. That fails for three reasons: the tokens sent per turn grow with conversation length (so cumulative cost grows faster than linearly), context from one phase leaks into the next, and the user loses all progress if they refresh the page or navigate away.

I designed and built a persistent state-machine controller that decouples workflow logic from the LLM prompt entirely. The controller tracks the current phase and candidate set in the database. When a user returns, it reconstructs exactly where they left off and grants the agent only the capabilities appropriate for that phase. The LLM handles natural language interaction. The controller handles process control. Neither depends on the other's internal state, which means you can swap models, change prompts, or add new workflow phases without breaking the progression logic.
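The separation between process control and the prompt can be illustrated with a minimal sketch. The phase names, the snapshot shape, and the use of a JSON string in place of a database row are all assumptions made for illustration; they are not taken from the Quorum codebase.

```typescript
// Hypothetical phase names; the real workflow's phases are not listed here.
type Phase = "upload" | "identify_supplier" | "create_entities" | "form_review" | "complete";

// Assumed snapshot shape; stands in for the persisted database row.
interface WorkflowSnapshot {
  workflowId: string;
  phase: Phase;
  candidateSupplierIds: string[];
}

// Allowed transitions live in code, not in the prompt, so the model cannot skip a phase.
const TRANSITIONS: Record<Phase, readonly Phase[]> = {
  upload: ["identify_supplier"],
  identify_supplier: ["create_entities", "form_review"],
  create_entities: ["form_review"],
  form_review: ["complete"],
  complete: [],
};

class WorkflowController {
  private snapshot: WorkflowSnapshot;

  constructor(snapshot: WorkflowSnapshot) {
    this.snapshot = snapshot;
  }

  // Rebuild the controller from persisted state, e.g. after a page refresh.
  static restore(serialized: string): WorkflowController {
    return new WorkflowController(JSON.parse(serialized) as WorkflowSnapshot);
  }

  get phase(): Phase {
    return this.snapshot.phase;
  }

  canAdvanceTo(next: Phase): boolean {
    return TRANSITIONS[this.snapshot.phase].includes(next);
  }

  advanceTo(next: Phase): void {
    if (!this.canAdvanceTo(next)) {
      throw new Error(`Illegal transition: ${this.snapshot.phase} -> ${next}`);
    }
    this.snapshot = { ...this.snapshot, phase: next };
  }

  // What would be written to the database after each transition.
  serialize(): string {
    return JSON.stringify(this.snapshot);
  }
}
```

Because the transition table is plain data, adding a phase in a design like this means adding one key and its successors, with no prompt changes.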

The controller ships with a unit test suite covering phase transitions, capability grants, and edge cases.

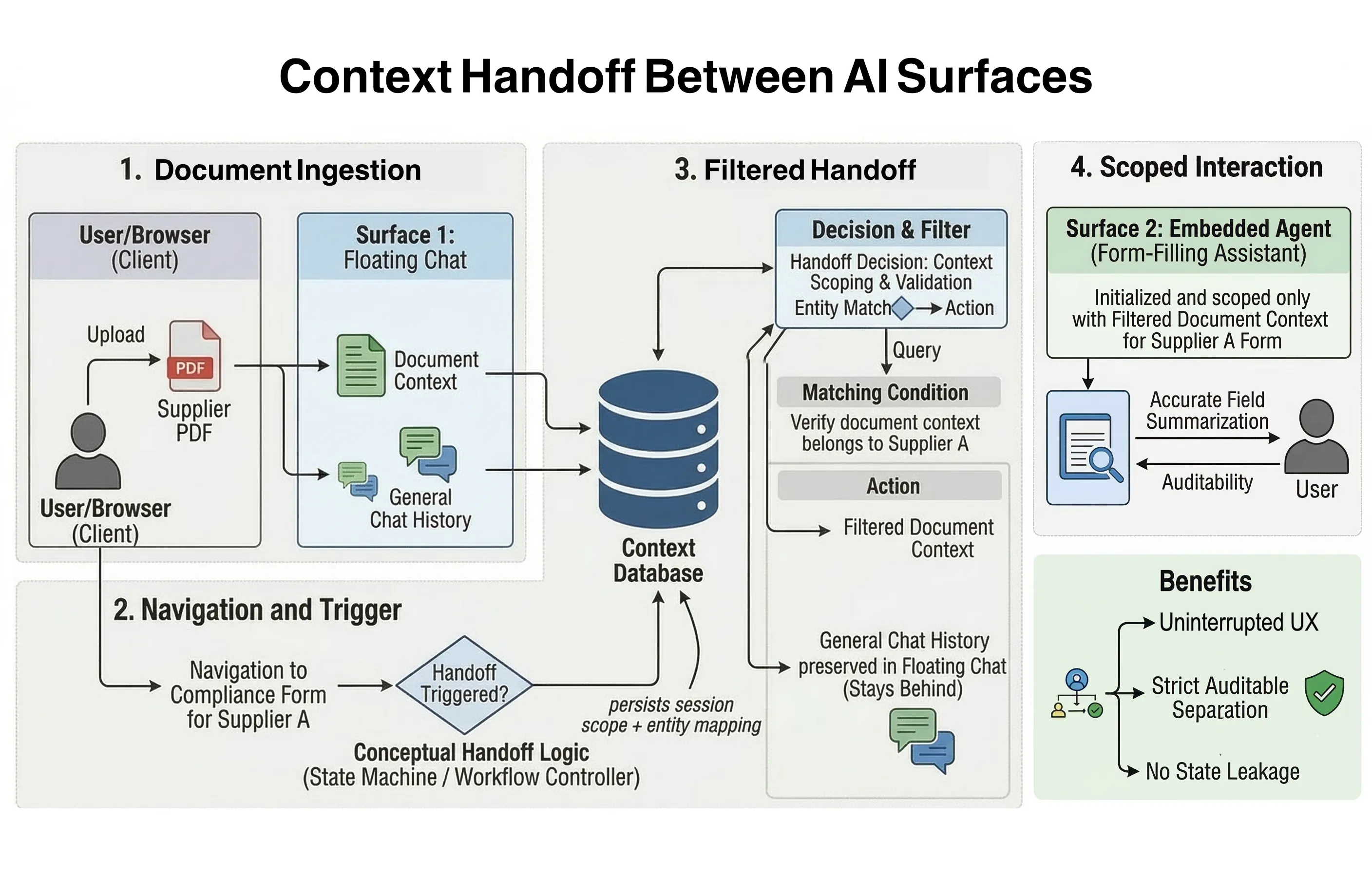

Context Handoff Between AI Surfaces

The platform has two distinct AI surfaces: a floating chat overlay accessible from any page in the application, and an embedded guidance agent scoped to individual compliance submissions. Users start in the floating chat (uploading documents, identifying suppliers, setting up new entities) and then transition to the embedded agent to complete a specific compliance form.

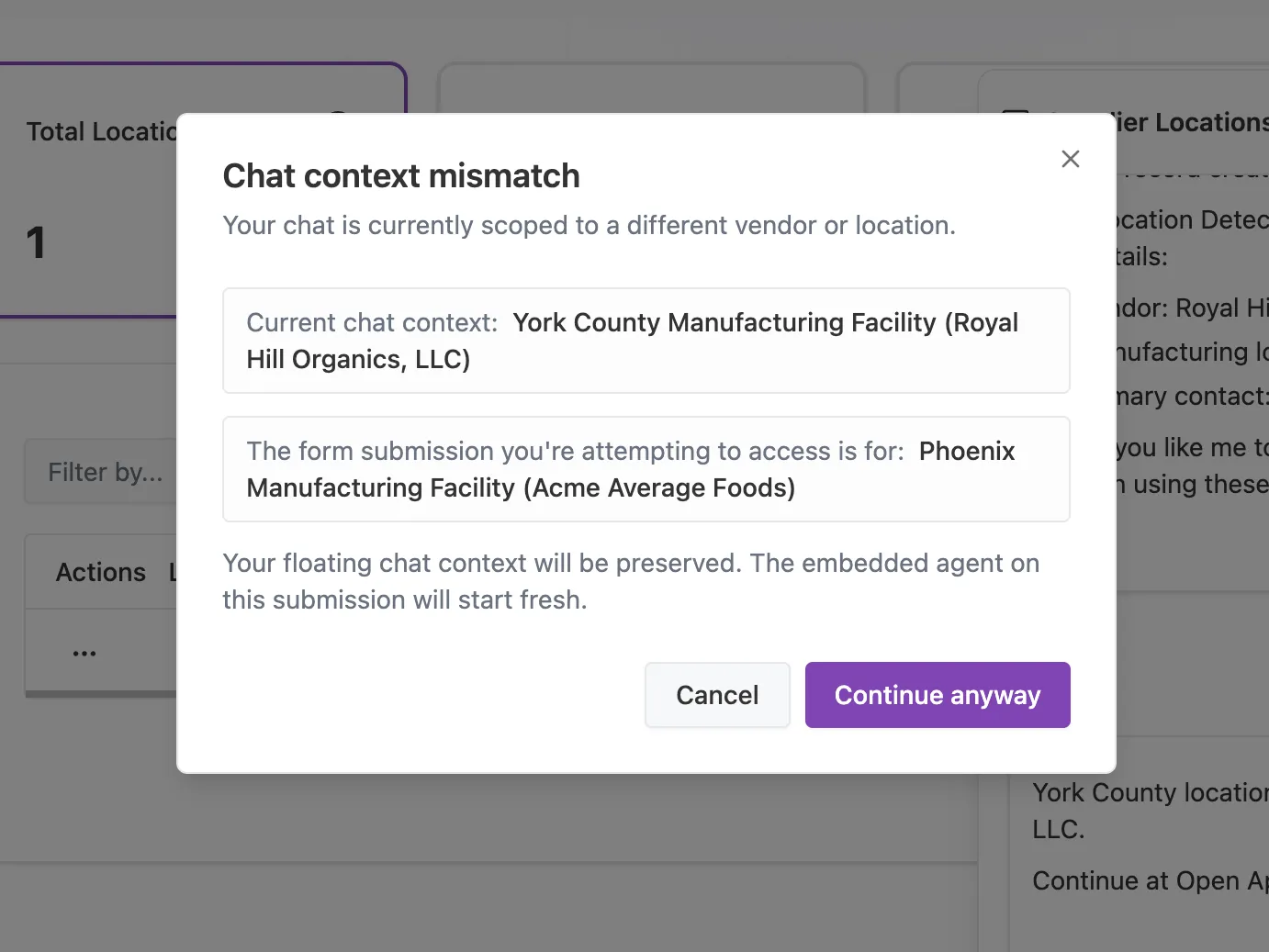

This handoff is deceptively hard. The embedded agent needs the document context from the floating chat, but it shouldn't inherit unrelated conversation history. If the user navigates to a different supplier's form, stale context from the previous session shouldn't contaminate the new one. And if the user goes back to the floating chat, their original conversation should still be there.

I built a context-scoping system that tracks which supplier and facility each chat session belongs to. On navigation, the system compares the target entity against the current chat context. If there's a mismatch, the user is warned and offered a clean start while the floating chat state is preserved independently. Handoff only occurs for explicitly approved submissions, preventing accidental context leakage between unrelated compliance workflows.
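A rough sketch of the navigation check follows. The type names, the `decideHandoff` function, and the three outcomes are hypothetical; the sketch only illustrates the rules described above (compare entities, warn on mismatch, hand off only approved submissions).

```typescript
// Assumed scope shape: which supplier and facility a chat session belongs to.
interface EntityScope {
  supplierId: string;
  facilityId: string | null;
}

interface ChatContext {
  sessionId: string;
  scope: EntityScope | null; // null until the chat is tied to an entity
  approvedSubmissionIds: string[];
}

type HandoffDecision =
  | { kind: "handoff"; submissionId: string }
  | { kind: "warn_mismatch"; current: EntityScope; target: EntityScope }
  | { kind: "fresh_start" };

function sameScope(a: EntityScope, b: EntityScope): boolean {
  return a.supplierId === b.supplierId && a.facilityId === b.facilityId;
}

// Pure function: it never mutates the floating chat's context, so that
// conversation is still intact if the user navigates back to it.
function decideHandoff(
  chat: ChatContext,
  target: EntityScope,
  submissionId: string,
): HandoffDecision {
  if (chat.scope === null) return { kind: "fresh_start" };
  if (!sameScope(chat.scope, target)) {
    return { kind: "warn_mismatch", current: chat.scope, target };
  }
  // Matching entity is not enough: the submission must be explicitly approved.
  if (!chat.approvedSubmissionIds.includes(submissionId)) {
    return { kind: "fresh_start" };
  }
  return { kind: "handoff", submissionId };
}
```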

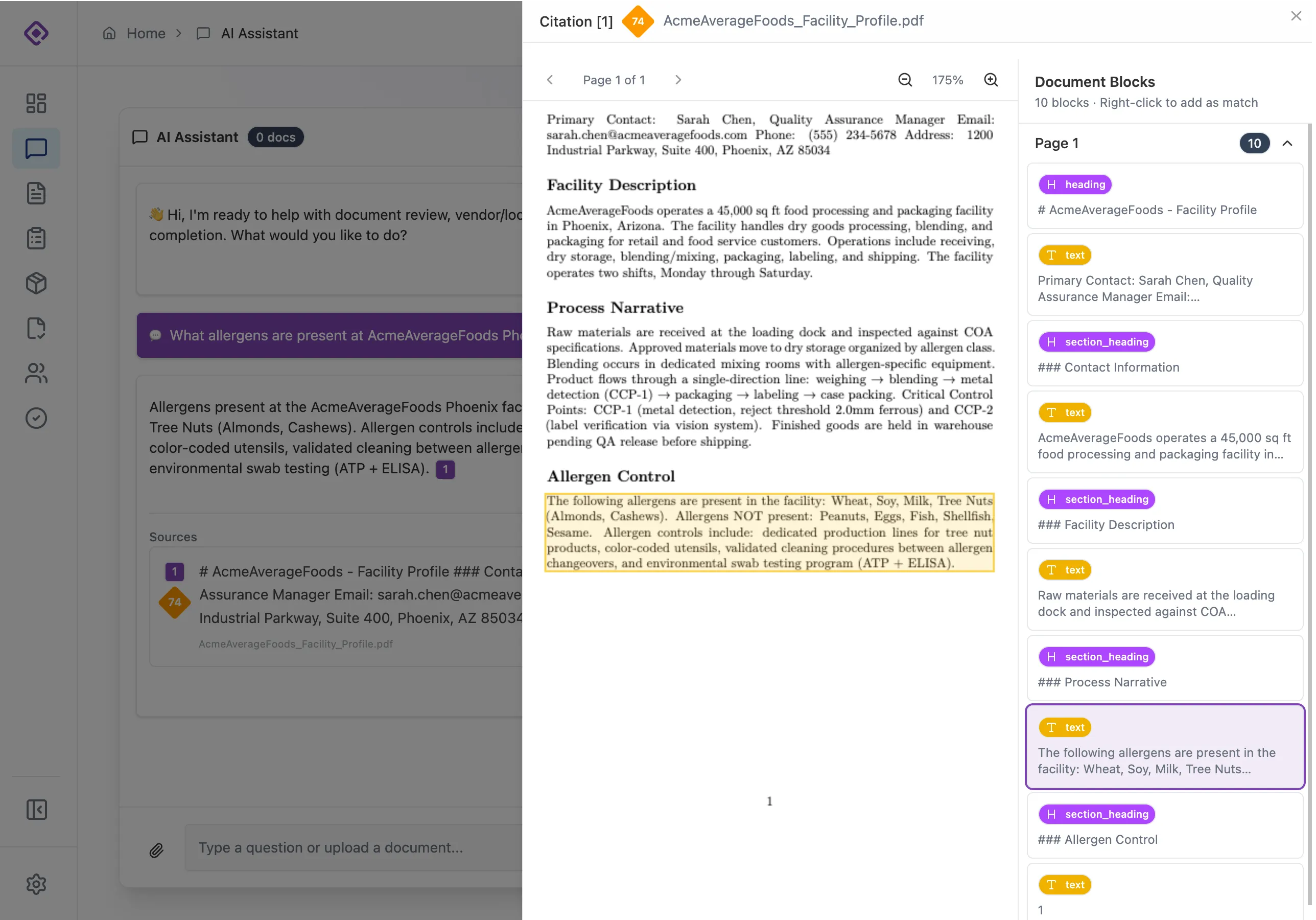

Auditability and Citations

In a compliance context, "the AI filled in this field" is not an acceptable answer during an audit. The platform already had block-level document references for its field matching pipeline. I extended this into the chat agent by building an inline citation framework where every claim the AI makes in conversation is linked to a specific block and coordinate in the source PDF. Retrieval results include provenance metadata so QA reviewers can click any citation badge and jump directly to the evidence in the original document. The system assists the reviewer. It never replaces their judgment.
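One way to picture the citation data is sketched below. The `[[cite:<blockId>]]` marker syntax, the field names, and the `resolveCitations` helper are assumptions for illustration, not the platform's actual format. The idea is that a citation only becomes a clickable badge if it resolves to a block that was actually retrieved.

```typescript
// Assumed geometry of a block on a PDF page.
interface BoundingBox {
  x: number;
  y: number;
  width: number;
  height: number;
}

// Assumed provenance metadata attached to each retrieval result.
interface SourceBlock {
  blockId: string;
  documentId: string;
  page: number;
  bbox: BoundingBox;
  text: string;
}

interface ResolvedCitation {
  marker: string; // the literal marker text found in the answer
  block: SourceBlock; // where the badge should jump to
}

interface CitationResult {
  resolved: ResolvedCitation[];
  unresolved: string[]; // cited ids with no matching retrieved block
}

function resolveCitations(answer: string, retrieved: readonly SourceBlock[]): CitationResult {
  const byId = new Map<string, SourceBlock>();
  for (const block of retrieved) byId.set(block.blockId, block);

  const resolved: ResolvedCitation[] = [];
  const unresolved: string[] = [];
  // Hypothetical marker syntax: [[cite:<blockId>]]
  const pattern = /\[\[cite:([A-Za-z0-9_-]+)\]\]/g;
  let match: RegExpExecArray | null;
  while ((match = pattern.exec(answer)) !== null) {
    const id = match[1];
    const block = byId.get(id);
    if (block !== undefined) {
      resolved.push({ marker: match[0], block });
    } else {
      unresolved.push(id);
    }
  }
  return { resolved, unresolved };
}
```

In a sketch like this, a non-empty `unresolved` list is a signal to flag or withhold the claim rather than show an unsupported statement to a reviewer.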

Least-Privilege Agent Capabilities

The agent uses a dynamic capability-injection pattern. Depending on the current page and the user's progress in the workflow, the controller grants access to specific operations: database queries, external lookups, entity creation, or record updates. Capabilities not relevant to the current phase are never loaded. This reduces the attack surface, cuts token overhead, and prevents the LLM from taking actions outside its current scope.
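A minimal sketch of this pattern, assuming a static registry filtered by page and phase. The capability names, page names, and phase names below are hypothetical placeholders chosen to mirror the operation types listed above.

```typescript
type WorkflowPhase = "identify_supplier" | "create_entities" | "form_review";
type AppPage = "dashboard" | "submission_form";

interface Capability {
  name: string;
  description: string;
  phases: readonly WorkflowPhase[];
  pages: readonly AppPage[];
  mutates: boolean; // true for operations that write to the database
}

// Hypothetical registry; the real capability set is not listed in this case study.
const REGISTRY: readonly Capability[] = [
  { name: "query_suppliers", description: "Search existing suppliers", phases: ["identify_supplier", "create_entities"], pages: ["dashboard", "submission_form"], mutates: false },
  { name: "external_lookup", description: "Look up a company externally", phases: ["identify_supplier"], pages: ["dashboard"], mutates: false },
  { name: "create_supplier", description: "Create a supplier record", phases: ["create_entities"], pages: ["dashboard"], mutates: true },
  { name: "update_record", description: "Update a form field", phases: ["form_review"], pages: ["submission_form"], mutates: true },
];

// Only capabilities matching both page and phase are ever described to the model.
function grantCapabilities(page: AppPage, phase: WorkflowPhase): Capability[] {
  return REGISTRY.filter((c) => c.pages.includes(page) && c.phases.includes(phase));
}

// Second check at execution time: a tool name the model invents or remembers
// from an earlier phase is rejected even if it appears in a model response.
function requireGranted(granted: readonly Capability[], requestedName: string): Capability {
  const capability = granted.find((c) => c.name === requestedName);
  if (capability === undefined) {
    throw new Error(`Capability not granted in this scope: ${requestedName}`);
  }
  return capability;
}
```

Filtering before the prompt is built is what saves tokens; the execution-time check is what enforces the boundary.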

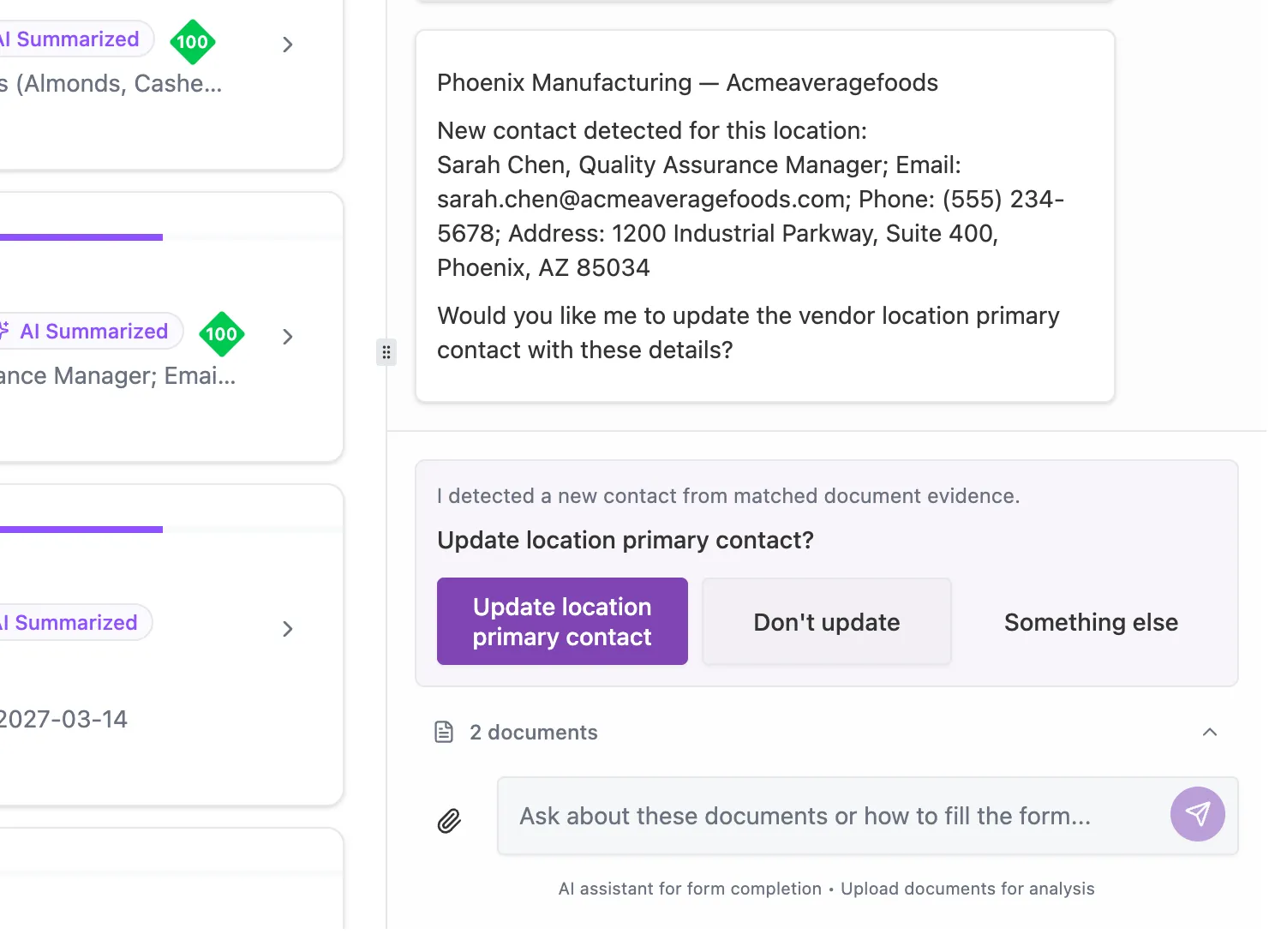

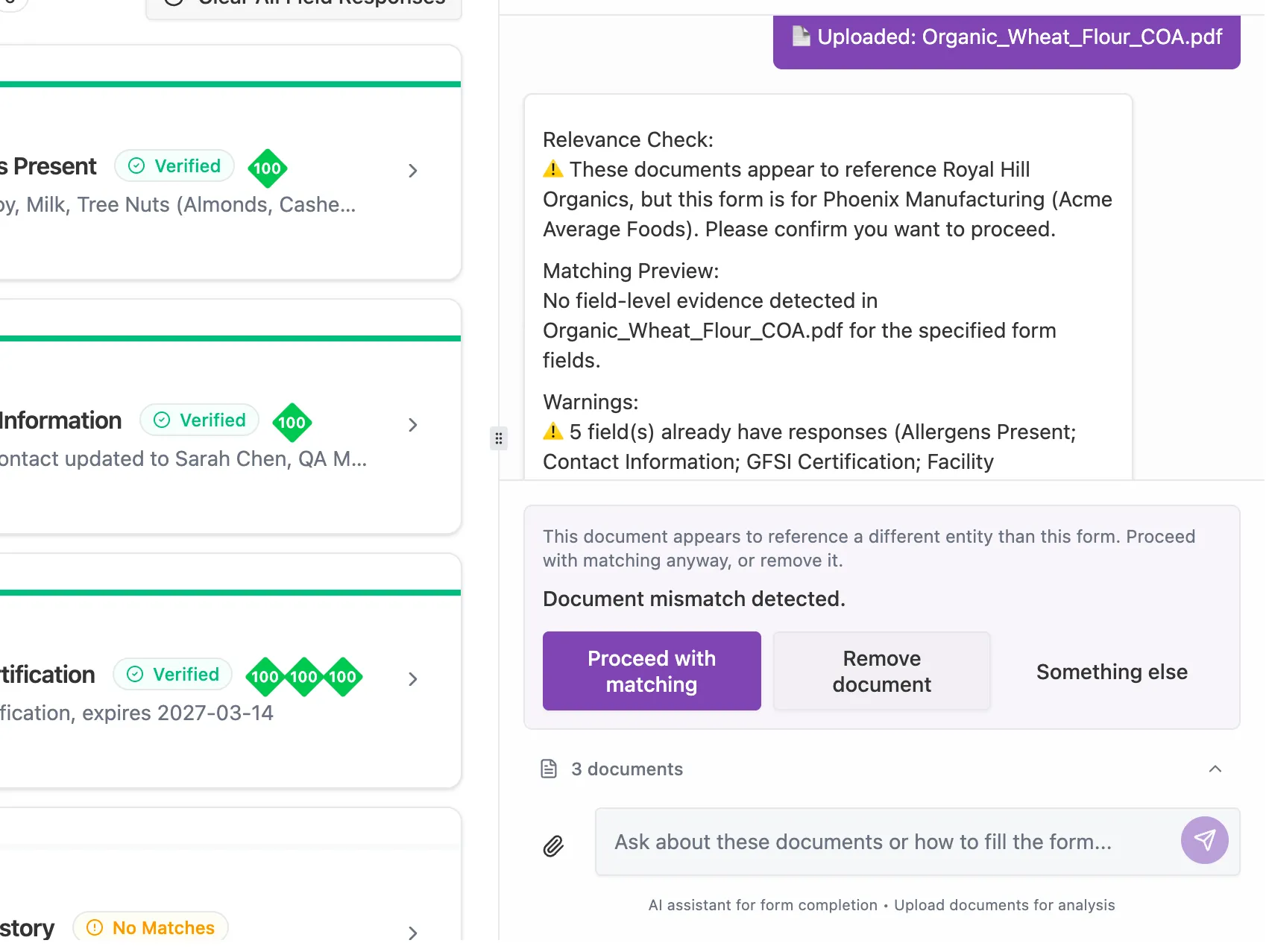

Automated Compliance Pipeline

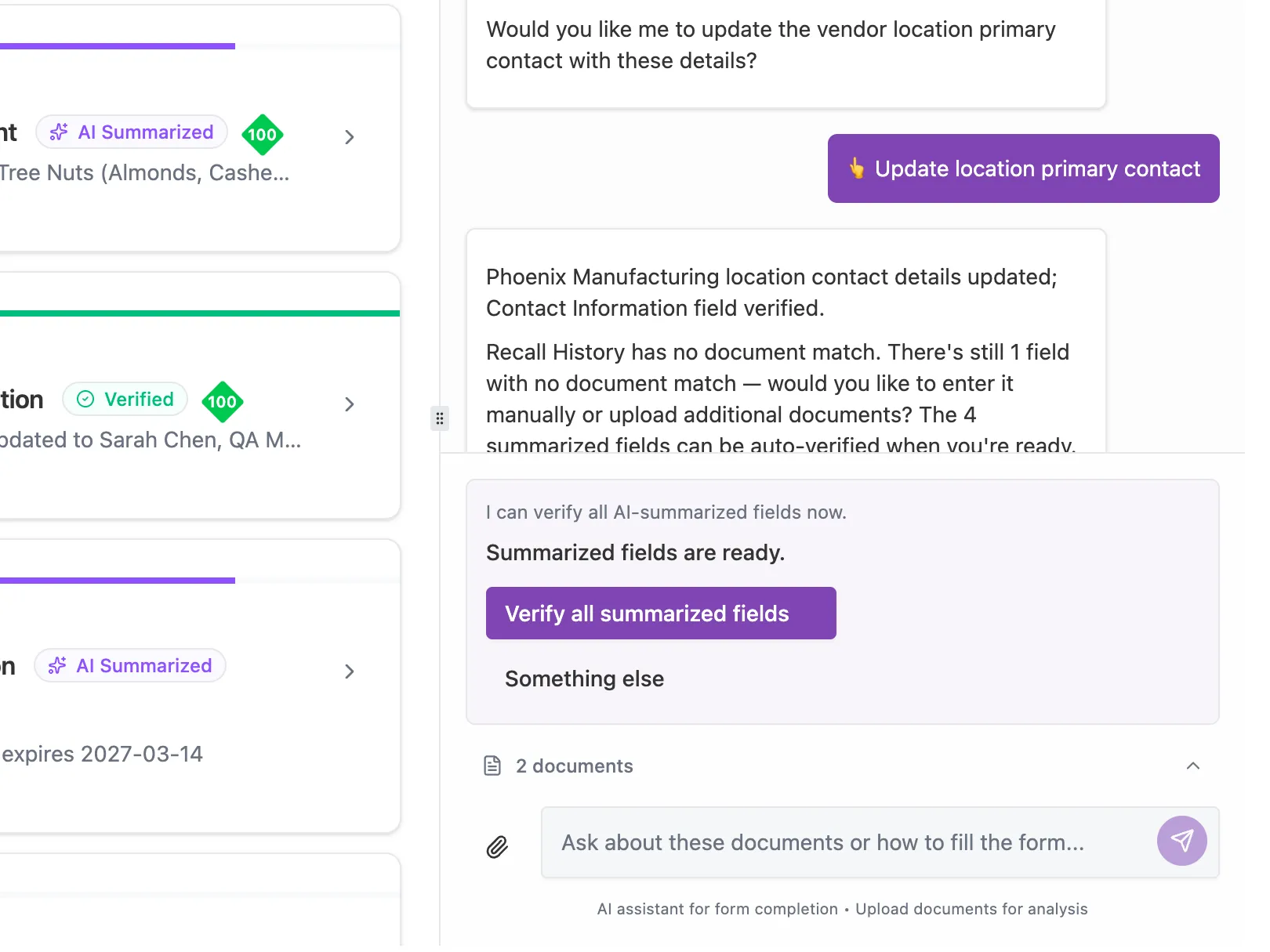

The core business value is reducing manual data entry. This comes from a multi-stage automation pipeline built into the embedded guidance agent. The agent validates uploaded documents for relevance, extracts and populates form fields with AI-drafted values, detects new or changed contact information against existing records, and handles bulk field review and approval workflows. Every stage has explicit confirmation gates. The agent proposes. The user decides.
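The "agent proposes, user decides" gate can be sketched as a status on each drafted value, with writes restricted to approved items. All names below (`FieldProposal`, `applyDecisions`, `commitApproved`) are hypothetical and only illustrate the gating idea.

```typescript
type ReviewStatus = "proposed" | "approved" | "rejected";
type ReviewDecision = "approved" | "rejected";

interface FieldProposal {
  fieldId: string;
  draftedValue: string;
  sourceBlockId: string; // provenance for the drafted value
  status: ReviewStatus;
}

// Apply a batch of user decisions. Fields the user did not touch stay "proposed".
function applyDecisions(
  proposals: readonly FieldProposal[],
  decisions: ReadonlyMap<string, ReviewDecision>,
): FieldProposal[] {
  return proposals.map((p) => {
    const decision = decisions.get(p.fieldId);
    return decision === undefined ? p : { ...p, status: decision };
  });
}

// The only path to a write: anything not explicitly approved is skipped.
function commitApproved(
  proposals: readonly FieldProposal[],
  write: (fieldId: string, value: string) => void,
): number {
  let written = 0;
  for (const p of proposals) {
    if (p.status !== "approved") continue;
    write(p.fieldId, p.draftedValue);
    written += 1;
  }
  return written;
}
```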

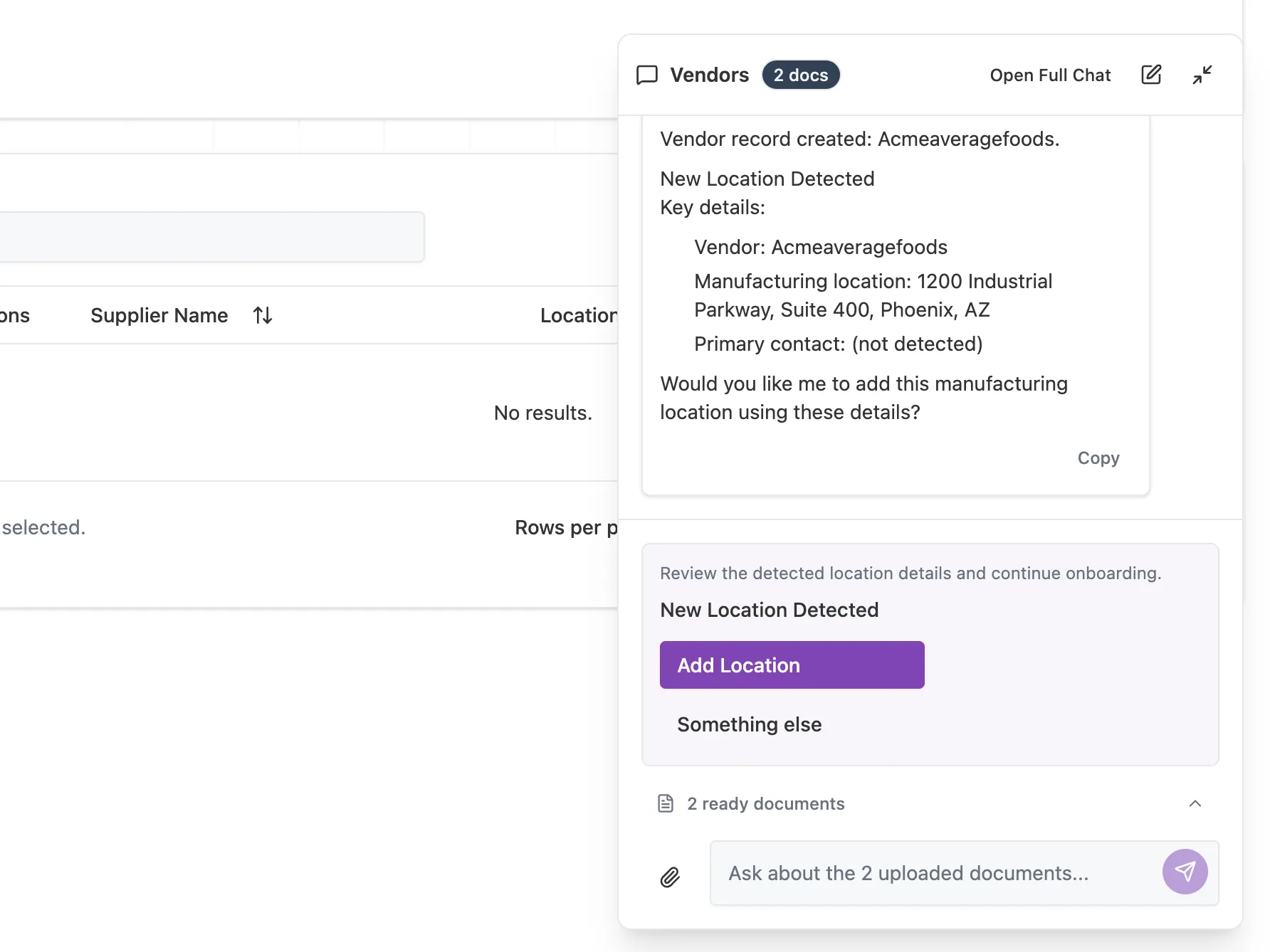

Supplier Onboarding

When a user uploads supplier documents, the agent scans for contact information and facility addresses not already on file. If no matching supplier exists, the user can create one directly from the chat, including locations, products, and entity linkages, without leaving the conversation. I also identified and fixed legacy database issues that had been silently blocking parts of the onboarding workflow across the entire platform. Every creation action requires explicit user confirmation before any write occurs.
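Detecting contacts that are new or changed relative to what is on file can be sketched as a small diff. Matching on a normalized email address and comparing phone numbers by digits only are assumptions made for this example; the actual matching rules are not described here.

```typescript
interface Contact {
  name: string;
  email: string;
  phone?: string;
}

type ContactChange =
  | { kind: "new"; extracted: Contact }
  | { kind: "changed"; existing: Contact; extracted: Contact; fields: string[] };

const normalizeEmail = (email: string): string => email.trim().toLowerCase();
const digitsOnly = (phone: string | undefined): string => (phone ?? "").replace(/\D/g, "");

// Returns proposals only; nothing is written until the user confirms each one.
function diffContacts(onFile: readonly Contact[], extracted: readonly Contact[]): ContactChange[] {
  const byEmail = new Map<string, Contact>();
  for (const c of onFile) byEmail.set(normalizeEmail(c.email), c);

  const changes: ContactChange[] = [];
  for (const candidate of extracted) {
    const existing = byEmail.get(normalizeEmail(candidate.email));
    if (existing === undefined) {
      changes.push({ kind: "new", extracted: candidate });
      continue;
    }
    const fields: string[] = [];
    if (existing.name.trim() !== candidate.name.trim()) fields.push("name");
    // A phone missing from the document is not treated as a change.
    if (candidate.phone !== undefined && digitsOnly(existing.phone) !== digitsOnly(candidate.phone)) {
      fields.push("phone");
    }
    if (fields.length > 0) {
      changes.push({ kind: "changed", existing, extracted: candidate, fields });
    }
  }
  return changes;
}
```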

Full-Stack Delivery

Serverless Functions: I wrote and modified edge functions for document classification, document parsing (adding Markdown and plaintext support alongside the existing PDF pipeline), embedding generation, and automated field processing. These run as workers consuming from job queues.

Infrastructure: I designed the database schema for workflow persistence and optimized document traversal for retrieval-augmented generation, including entity triggers and job scheduling.

Performance Under LLM Load: Adding AI chat features to an existing single-page application risks degrading the baseline experience. I audited the build pipeline, implemented Vite dependency pre-bundling for heavy AI libraries, built a lazy-loading Markdown renderer that defers rendering dependencies until content is actually displayed, and replaced barrel-file imports with direct imports across the layout and chat components, enforcing direct imports with a custom ESLint rule. These changes delivered an improvement of more than 40% in Time to Interactive, keeping the app responsive under the added weight of LLM-powered features. The AI assistant is built on Svelte 5 reactive primitives with status polling that never blocks the main UI thread.
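The deferral idea behind a lazy-loading renderer can be sketched with a small memoized loader. `lazyOnce` is a hypothetical helper, and the stand-in renderer below takes the place of a real dynamic `import()` of a Markdown library; the actual component is not shown in this case study.

```typescript
// Wrap an async loader so the work runs at most once, on first use.
// Concurrent callers share the same in-flight promise.
function lazyOnce<T>(loader: () => Promise<T>): () => Promise<T> {
  let pending: Promise<T> | undefined;
  return () => {
    if (pending === undefined) {
      pending = loader().catch((err) => {
        // Clear the cache on failure so a later call can retry the load.
        pending = undefined;
        throw err;
      });
    }
    return pending;
  };
}

type MarkdownRenderer = (source: string) => string;

// Stand-in loader. In an app, the body would be a dynamic import of the
// rendering library so the bundler can split it into a separate chunk.
const loadMarkdownRenderer = lazyOnce<MarkdownRenderer>(async () => {
  return (source: string) => source.trim();
});

// Nothing is loaded until the first chat message actually needs rendering.
async function renderMessage(source: string): Promise<string> {
  const render = await loadMarkdownRenderer();
  return render(source);
}
```

The design choice is that pages which never display AI output never pay for the renderer, which is the kind of saving that shows up in Time to Interactive.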

Outcomes

- Over 70% reduction in manual compliance data entry through document-to-field extraction with explicit user verification at every stage.

- Full audit trail: every AI output, in both the chat agent and the automated compliance pipeline, is backed by cited, coordinate-level references to the source document.

- Persistent workflow state: multi-step supplier onboarding survives page refreshes, navigation, and session interruptions. Context handoff between the floating chat and embedded form agent preserves progress without leaking state between unrelated entities.

- 40%+ improvement in Time to Interactive: dependency pre-bundling, lazy loading, and import refactoring kept the app responsive despite the addition of LLM-powered features.

Project Information

- Category: AI/Compliance Automation (Contract)

- Role: Lead Engineer, AI Workflow & Infrastructure

- Client: Quorum Food Safety Solutions

- Timeframe: 2026

- Core Deliverable: Automated Supplier Onboarding & Document Verification

Technical Stack

- Frontend: SvelteKit (Svelte 5 Runes), TypeScript, Tailwind CSS

- AI/LLM: Vercel AI SDK, OpenAI (GPT-4o), Perplexity API

- Backend & Data: Supabase (PostgreSQL), Edge Functions, PGMQ (Job Queues)

- Testing & Tooling: Vitest, Vite (Custom Performance Warmup), ESLint (Import Guarding)